February 26, 2019

The application of facial recognition technology is becoming commonplace in modern life, from identifying who is coming and going in an airport to ensuring the owner of a phone is the one unlocking it.

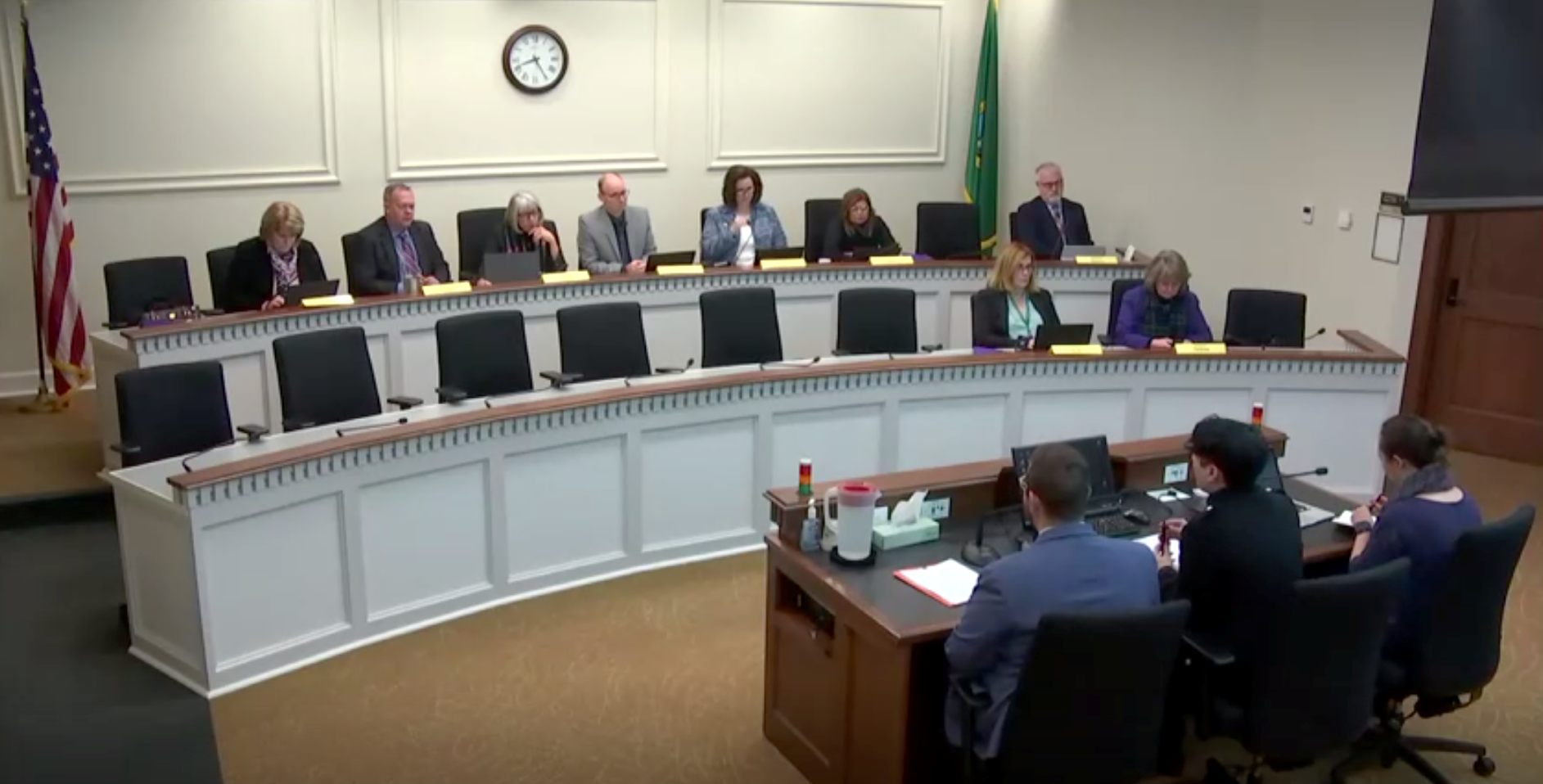

In February 2019, HCDE PhD student Os Keyes testified to the Washington State House of Representatives as an expert witness in support of House Bill 1654, a bill to prohibit state and local government from using facial recognition technology.

But according to Os Keyes, a PhD student in the Department of Human Centered Design & Engineering, these seemingly innocuous security uses mask deep risks, and a lack of regulation makes those risks more likely.

“We know that tools like this that rely on data-driven algorithms have extreme potential for bias, especially toward people who are already vulnerable,” said Keyes. “And we currently have no regulations on facial recognition technology and who can use it.”

Keyes’ background is in data science, and they have spent several years writing machine learning algorithms. Since joining academia, they have examined the history and use of facial recognition. Keyes’ recent research looked at Automatic Gender Recognition (AGR), a subfield of facial recognition that uses algorithms to identify the gender of individuals. Unpacking the way the technology functions, Keyes concluded that AGR fundamentally ignores—and cannot adequately include—transgender lives and identities.

In their paper on the topic, *The Misgendering Machines: Trans/HCI Implications of Automatic Gender Recognition*, Keyes describes the dangerous consequences of this exclusion: "If systems are not designed to include trans people, inclusion becomes an active struggle: individuals must actively fight to be included in things as basic as medical systems, legal systems or even bathrooms. This creates space for widespread explicit discrimination, which has (in, for example, the United States) resulted in widespread employment, housing and criminal justice inequalities, increased vulnerability to intimate partner abuse and, particularly for trans people of colour, increased vulnerability to potentially fatal state violence."

In February 2019, Keyes was invited by the ACLU to testify to the Washington State House of Representatives as an expert witness in support of House Bill 1654, a bill to prohibit state and local government from using facial recognition technology.

To the House Committee on Innovation, Technology & Economic Development, Keyes presented their findings on how facial recognition technology has a disproportionate impact on trans people and people of color. Keyes explained that, when deployed, facial recognition technology is more likely to flag trans people as unexpected, exposing them to potentially unpleasant or even violent outcomes.

What does an ethical piece of facial recognition technology look like? Keyes isn’t sure it’s possible, arguing that the technology is fundamentally one of control and discrimination. In collaboration with Nikki Stevens, a PhD student at Arizona State University, they are writing the first critical history of facial recognition. “If we are trying to reform facial recognition technology, we need to uncover its past. We need to ask: why were these technologies developed, and what were they intended to do? What is its legacy, and what are modern implementations (and reform attempts) inheriting?”

In the meantime, Keyes has a message for technology designers: “Instead of just developing a new technology that you can envision a good use for, make sure that you are looking at the infrastructure already in place that this technology will interface with. Make sure that you look at the way your piece of technology can be co-opted. Make sure you look at the politics that exist within the context of your new technology. And finally, make sure that what you build supports the individual autonomy of everyone who will interact with it.”
